ChatGPT has made content summarization a breeze, but accuracy, plagiarism, and data privacy remain concerns.

Summary

Since OpenAI’s ChatGPT burst onto the scene at the end of 2022, there has been no shortage of voices calling artificial intelligence, and generative AI in particular, a groundbreaking technology. The media sector is grappling with deep and complex questions about what generative AI (GenAI) might mean for journalism. Although it now seems increasingly unlikely that AI threatens journalists’ jobs, as some may have feared, news executives are raising other questions, for example about information accuracy, plagiarism, and data privacy.

To get an overview of the situation in the sector, WAN-IFRA surveyed the global community of journalists, editors, and other media professionals about their newsrooms’ use of generative AI tools. A total of 101 participants from around the world took part in the survey; here are some key points from their responses.

Half of newsrooms already work with GenAI tools

Given that most generative AI tools have become publicly available only within the last few months, it is quite remarkable that nearly half (49%) of respondents said their newsrooms already use tools like ChatGPT. On the other hand, because the technology is still evolving rapidly and perhaps unpredictably, it is understandable that many newsrooms remain cautious. That caution may explain why some respondents’ companies have not (yet) adopted these tools.

Overall, the industry’s attitude toward generative AI is extraordinarily positive: 70% of survey participants said they expect generative AI tools to be useful for their journalists and newsrooms. Only 2% see no value in the short term, another 10% are unsure, and 18% believe the technology needs more development to become truly useful.

Content summaries are the most common use case

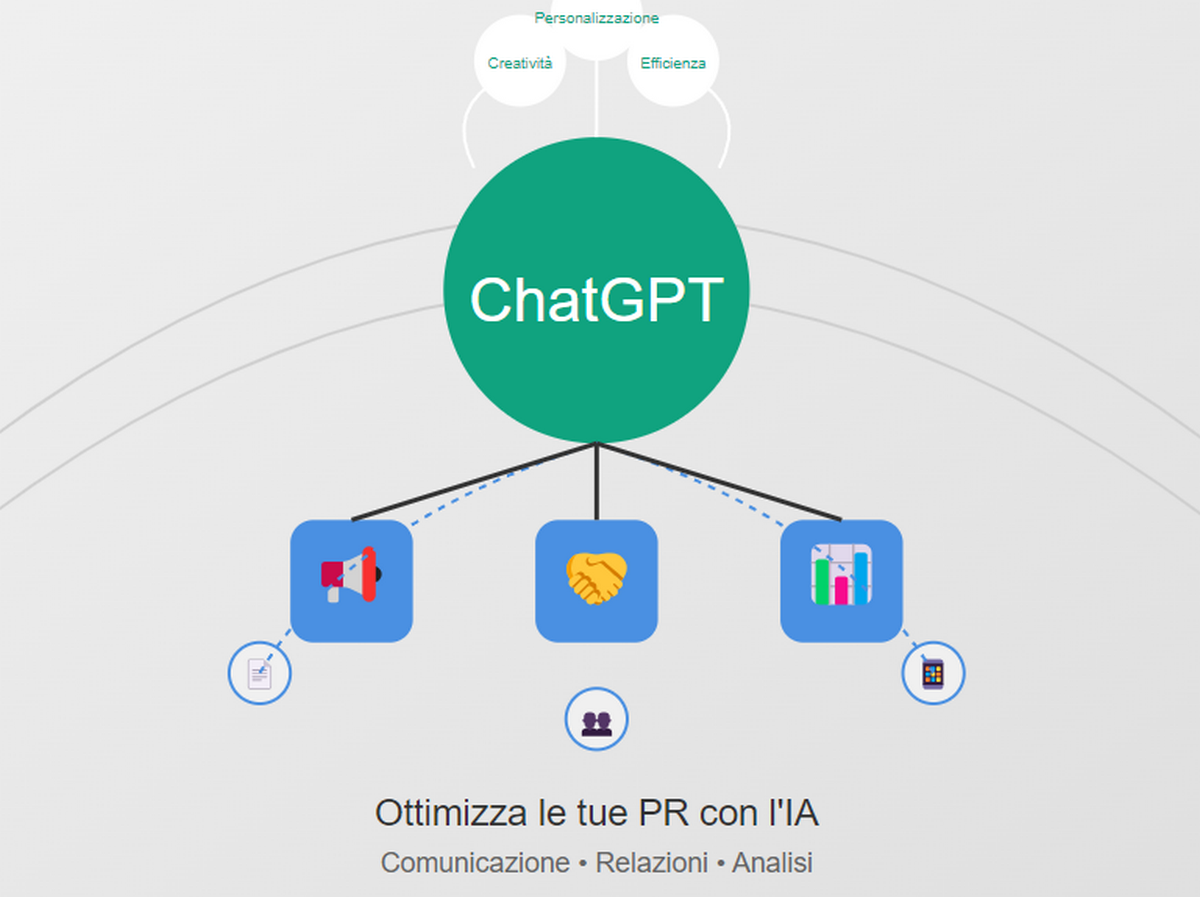

Although there have been somewhat alarmist reactions to ChatGPT questioning whether the technology could end up replacing journalists, in reality relatively few newsrooms use GenAI tools to create articles. According to our respondents, the main use case is instead the tools’ ability to digest and condense information, for example into summaries and bullet points. Other key tasks for which journalists use the technology include research and simplified research, text correction, and workflow enhancement.

In the future, common use cases are likely to evolve as more newsrooms look for broader ways to leverage the technology and integrate it further into their operations. Our respondents highlighted personalization, translation, and deeper workflow and efficiency improvements as areas where they expect GenAI to become more useful.

Few newsrooms have guidelines for using GenAI

Practices for monitoring the use of GenAI tools vary widely across newsrooms. For now, many publishers take a relaxed approach: nearly half of survey participants (49%) said their journalists are free to use the technology as they see fit, while 29% said they do not use GenAI at all.

Only a fifth of respondents (20%) said management has issued guidelines on when and how to use GenAI tools, while 3% reported that the technology is not allowed at their publications. As newsrooms grapple with the many complex issues surrounding GenAI, it seems reasonable to expect that more publishers will establish specific AI policies on how to use the technology (or perhaps ban it altogether).

Inaccuracies and plagiarism are the main concerns for newsrooms

Some news outlets have published content created with the help of AI tools that was later revealed to be false or inaccurate, so it is perhaps unsurprising that inaccurate information and poor content quality are publishers’ main concern about AI-generated content: 85% of respondents highlighted this as a specific issue related to GenAI.

Publishers are also concerned about plagiarism and copyright infringement, followed by data protection and privacy. It seems likely that the lack of clear guidelines (see previous point) amplifies these uncertainties, and that developing AI policies, along with staff training and open communication about the responsible use of GenAI tools, should help alleviate them.

Source: journalism.co.uk