It is well known that artificial intelligence suffers from deeply rooted problems when it comes to diversity: machine learning algorithms are trained on historical data that may incorporate institutional discrimination. NLP and generative AI models suffer from the same problems, and the industry itself faces a challenge related to diversity.

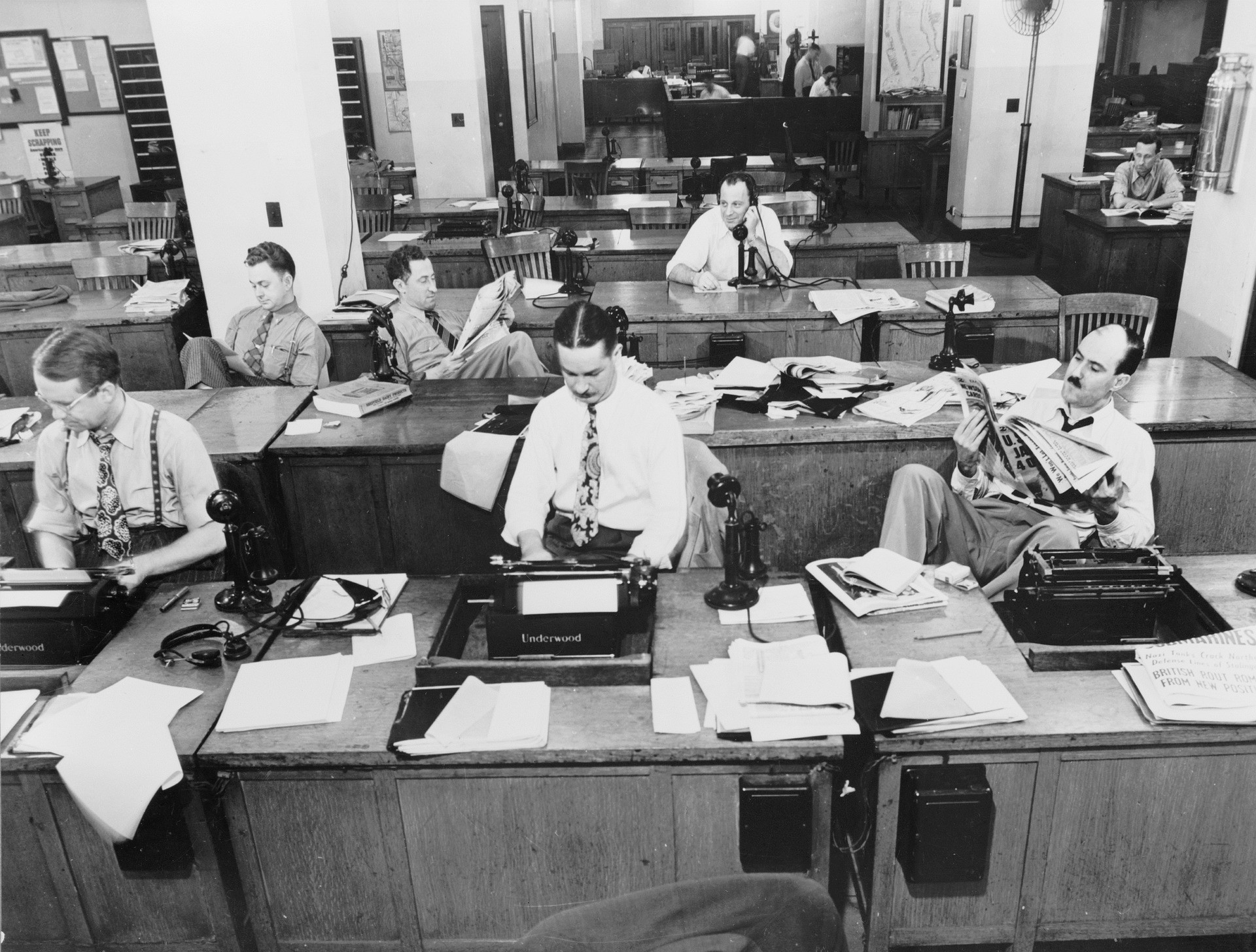

It is surprising, then, that the emerging discussion in the sector about generative artificial intelligence has so far failed to address these issues: the press release from the UK regulator Ofcom earlier this month does not mention them, nor do the new guidelines from the US Radio Television Digital News Association. The lessons from BuzzFeed and the discussion about its use of the technology do not touch on the subject; CNET, despite being burned by the technology, does not mention biases in its policy.

Wired mentions biases in its statement on the use of generative artificial intelligence, but only in reference to content generation, not in the sections on idea generation or research. The closest I have seen an organization come is the Guardian, whose recently published principles mention “protection from bias” in general terms. Take a look at the main concerns about generative artificial intelligence identified in an industry survey conducted by WAN-IFRA and you will not see biases mentioned.

Six principles for responsible journalistic use of generative artificial intelligence and diversity and inclusion

The six principles for the responsible journalistic use of generative artificial intelligence and diversity and inclusion aim to provide a starting point for discussing how we can ensure that new practices and workflows involving generative artificial intelligence do not repeat the mistakes of the past. They also aim to give journalists some practical advice on how to start experimenting with generative tools.

The principles are:

- Be aware of inherent biases: it is easy to forget that generative artificial intelligence is inherently biased, like any source. We must ensure that journalists start from this, which leads to…

- Incorporate diversity into your prompts: good prompt engineering is essential for effective use of artificial intelligence. Just as good journalists know how to ask good questions, they should also know how to write good prompts, and that includes actively promoting diversity.

- Recognize the importance of original material and references: this is another familiar journalistic skill. We should always know where information comes from and ensure that those sources do not represent only the loudest voices. For example, when asked “Who are the twenty most important actors of the 20th century?” ChatGPT did not name a single actor of color; when asked about Winston Churchill or the American founding fathers, it did not include important critical facts. We would reject these answers from humans, so it is important to do the same with generative artificial intelligence.

- Report errors and biases: artificial intelligence is still learning, so it is important to understand how your use feeds into that learning.

- Text produced by generative artificial intelligence should be viewed with journalistic skepticism: once again, we are just emphasizing a fundamental journalistic trait here (I would say that the ability of generative artificial intelligence to generate text will prove to be one of its least useful applications).

- Be transparent where appropriate: the range of applications for artificial intelligence is so broad that it’s hard to say you should always be transparent, but it should certainly be a consideration.
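
As a rough illustration of the second principle, the sketch below shows one way a newsroom might attach explicit diversity instructions to a question before sending it to a generative tool. The function name `build_inclusive_prompt` and the example criteria are hypothetical and are not taken from any published guideline or tool; the code only assembles text and does not call any AI service.

```python
def build_inclusive_prompt(question: str, criteria: list[str]) -> str:
    """Append explicit diversity instructions to a base question.

    Both the function and the criteria passed to it are illustrative
    assumptions, not part of any real prompt-engineering library.
    """
    if not criteria:
        # Nothing to add: return the question unchanged.
        return question
    lines = [question.strip(), "", "When answering, make sure to:"]
    lines += [f"- {item}" for item in criteria]
    return "\n".join(lines)


prompt = build_inclusive_prompt(
    "Who are the twenty most important actors of the 20th century?",
    [
        "include people of different ethnicities, genders, and nationalities",
        "explain the criteria used to judge importance",
        "name the sources the answer draws on",
    ],
)
print(prompt)
```

Writing the criteria out as a list makes them easy to review and reuse across a team, but the answer that comes back still needs the same skepticism and source checking described in the other principles.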

Source onlinejournalismblog